02 Apr 2026

What began as a demonstration for IISc Open Day became something much more. Two researchers at one of India's most prestigious institutions took a fundamental question (can a robot learn to move the way a human does?) and turned it into a working system. They didn't just simulate it. They built it, tested it, and validated it on a real industrial cobot.

The cobot was Syncro 5 by Addverb. And the project, now open source, offers a glimpse of what becomes possible when students get access to real hardware, real problems, and the freedom to push boundaries.

Addverb's Belief: Real Learning Begins with Real Systems

India produces some of the world's most talented engineers. But most of them graduate without ever having programmed a robot that isn't simulated. They write code for virtual arms in environments where nothing ever breaks, nothing ever drifts, and no motor ever overshoots. Then they enter industry and the gap is jarring.

At Addverb, we believe robotics education needs a fundamental reset. Not more coursework. More contact with reality. Our vision for academic collaboration is built on five principles:

Go beyond simulations. Real learning begins with real systems.

Create moments of wonder. We invite students and institutions into our facilities to see robots operating at scale: not in isolation, but as integrated systems solving real logistics problems.

Bring the factory into the classroom. Through partnerships like NAMTECH, we've built advanced Robotics & Automation Labs where quadrupeds, cobots, AMRs, carton shuttles, vertical sortation systems, and robotic sorters operate together as a live industrial site, not a showcase.

Teach systems, not silos. Integration, troubleshooting, and optimisation as they happen in the real world, not as isolated lab exercises.

Build confidence through experience. Engineers grow by working independently with robots in real environments. That confidence can't be simulated.

Syncro 5: Built for the Classroom, Ready for the Factory

Syncro 5 is Addverb's collaborative robot arm designed from the ground up to serve three distinct roles in academia and industry:

• A teaching instrument. Professors can use Syncro 5 to demonstrate robotics principles with a system that behaves exactly as it would on an industrial floor. No shortcuts, no dumbed-down interfaces.

• An experimentation platform. Students can program, test, and iterate on Syncro 5 for coursework, capstone projects, and independent research, with access to Addverb's open-source libraries at community.addverb.ai.

• A research and prototyping tool. For labs and research centres, Syncro 5 provides a hardware-accurate platform to validate algorithms, test motion planning approaches, and publish results that hold up under real-world scrutiny.

It was on Syncro 5 that Shreya and Akshay's project found its most meaningful test.

The Project: MonkeySee MonkeyDo - Teaching a Robot to Imitate Human Motion

The original goal was deceptively simple: build an interactive demo for IISc Open Day that could show visitors, including students with no robotics background, what cyber-physical systems look like in practice. The idea was to capture a human's arm movement and have a robot replicate it in real time.

The first instinct was a wearable motion capture suit with LED markers. It worked in controlled conditions. But an open day has high visitor volume, varying user profiles, and no time for setup. The system needed to be walk-up-and-use. That constraint forced a better solution.

The Core Challenge

Three problems had to be solved simultaneously:

• Capturing human arm motion without any wearable hardware

• Translating that motion to a robot with different kinematics and fewer degrees of freedom

• Doing all of this in real time, intuitively, and at scale

Traditional joint-to-joint mapping fails when the robot and the human don't share the same number of degrees of freedom. Most real robotic arms are underactuated relative to a human arm: they have fewer joints, so a direct one-to-one mapping is impossible. A smarter approach was needed.

The Solution

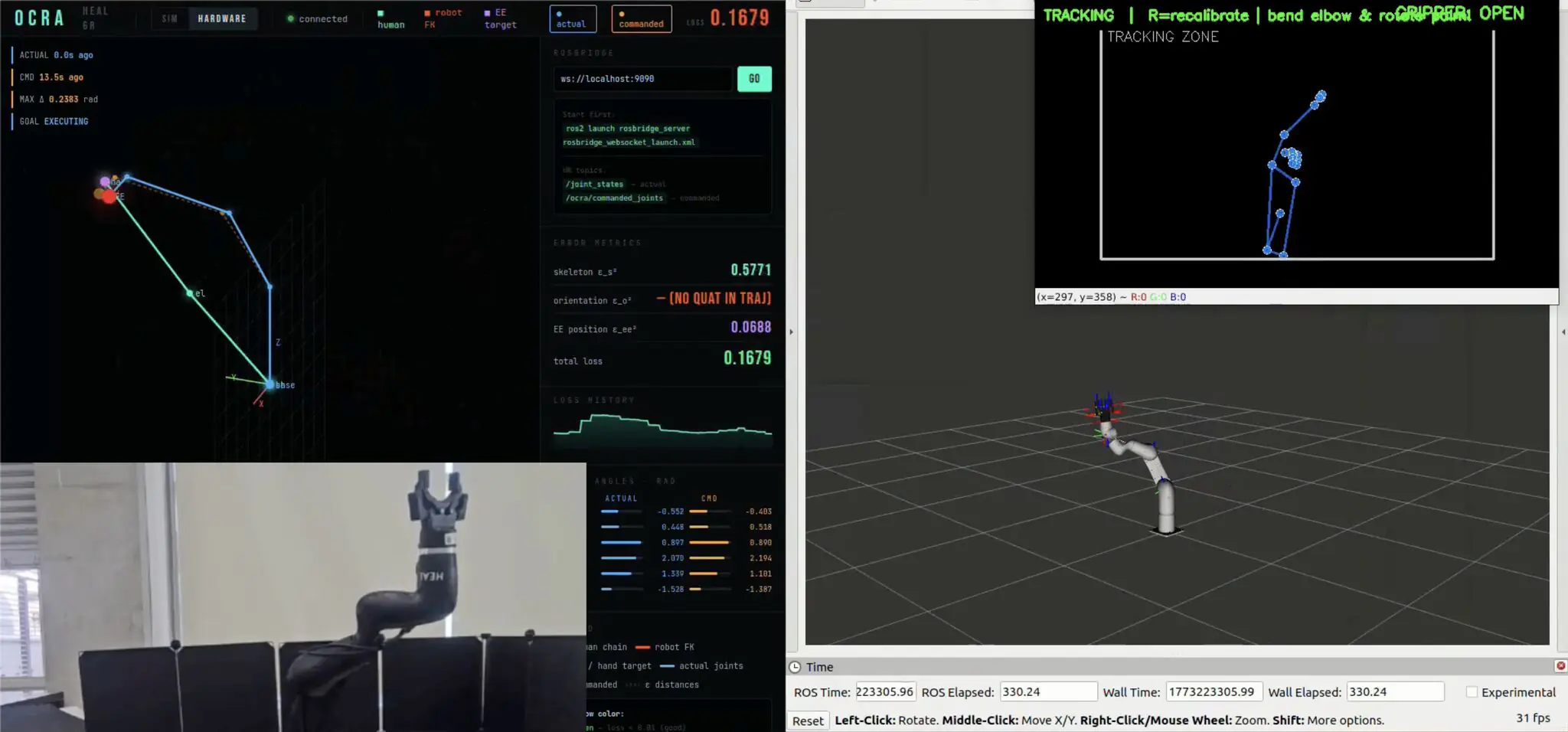

The team built a vision-based pipeline using a stereo depth camera and MediaPipe pose estimation to extract a real-time 3D skeletal model of the human arm - no wearables, no markers, no setup time.
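To make the capture step concrete, here is a minimal sketch of how a 2D pose landmark and a depth reading might be combined into a 3D point. It assumes a simple pinhole camera model and landmark coordinates normalised to the image size, as MediaPipe pose landmarks are. The `Intrinsics` class, the `landmark_to_3d` helper, and the camera values are hypothetical and are not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole camera parameters (the values used below are illustrative)."""
    fx: float  # focal length along x, in pixels
    fy: float  # focal length along y, in pixels
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def landmark_to_3d(u_norm, v_norm, depth_m, width, height, cam):
    """Back-project a normalised image landmark and its depth (metres)
    into a 3D point in the camera frame."""
    u = u_norm * width    # normalised [0, 1] -> pixel column
    v = v_norm * height   # normalised [0, 1] -> pixel row
    x = (u - cam.cx) * depth_m / cam.fx
    y = (v - cam.cy) * depth_m / cam.fy
    return (x, y, depth_m)


# Hypothetical 640x480 camera and a wrist landmark seen 1.2 m away.
cam = Intrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)
wrist = landmark_to_3d(0.75, 0.5, 1.2, 640, 480, cam)
```

Repeating this for the shoulder, elbow, and wrist landmarks would give the kind of 3D arm skeleton the pipeline streams onward. A real system would also need to handle missing or noisy depth readings.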

For the translation problem, they implemented OCRA (Optimization-based Customizable Retargeting Algorithm), a system that doesn't try to match joints but instead matches the overall similarity of the movement. Think of it as virtual strings connecting corresponding segments of the human arm and the robot. The algorithm continuously adjusts the robot's posture to closely mirror the human's pose, even when the robot has fewer joints to work with.
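The virtual-strings idea can be illustrated with a toy example. The sketch below is not the OCRA implementation: it assumes a planar arm, places the human shoulder at the robot base, and uses plain finite-difference gradient descent where the project uses JAX. All function names and numbers are hypothetical. It ties points at equal fractions of arm length on the human and the robot together, then adjusts the joint angles to shorten those strings.

```python
import math


def point_along(polyline, t):
    """Return the point at fraction t (0..1) of the polyline's total length."""
    seg_lengths = [math.dist(a, b) for a, b in zip(polyline, polyline[1:])]
    target = t * sum(seg_lengths)
    for (a, b), length in zip(zip(polyline, polyline[1:]), seg_lengths):
        if length > 0 and target <= length:
            s = target / length
            return (a[0] + s * (b[0] - a[0]), a[1] + s * (b[1] - a[1]))
        target -= length
    return polyline[-1]


def robot_polyline(q, link_lengths):
    """Planar forward kinematics: joint positions from base to tip."""
    x, y, angle = 0.0, 0.0, 0.0
    points = [(x, y)]
    for qi, li in zip(q, link_lengths):
        angle += qi
        x += li * math.cos(angle)
        y += li * math.sin(angle)
        points.append((x, y))
    return points


def string_cost(q, human, link_lengths, samples=8):
    """Sum of squared 'string' lengths between matching points on both arms.
    The human arm is scaled to the robot's total reach first."""
    robot = robot_polyline(q, link_lengths)
    human_length = sum(math.dist(a, b) for a, b in zip(human, human[1:]))
    scale = sum(link_lengths) / human_length
    cost = 0.0
    for k in range(1, samples + 1):
        t = k / samples
        hx, hy = point_along(human, t)
        rx, ry = point_along(robot, t)
        cost += (rx - scale * hx) ** 2 + (ry - scale * hy) ** 2
    return cost


def retarget(human, link_lengths, q0, steps=500, lr=0.05, eps=1e-5):
    """Gradient descent on the string cost using central differences."""
    q = list(q0)
    for _ in range(steps):
        grad = []
        for i in range(len(q)):
            q_hi, q_lo = q[:], q[:]
            q_hi[i] += eps
            q_lo[i] -= eps
            diff = string_cost(q_hi, human, link_lengths) - string_cost(q_lo, human, link_lengths)
            grad.append(diff / (2 * eps))
        q = [qi - lr * gi for qi, gi in zip(q, grad)]
    return q


# Human arm with three segments (shoulder, elbow, wrist, hand); robot with two links.
human_arm = [(0.0, 0.0), (0.3, 0.0), (0.5, 0.2), (0.55, 0.3)]
links = [0.4, 0.4]
q_start = [0.0, 0.0]
q_final = retarget(human_arm, links, q_start)
cost_before = string_cost(q_start, human_arm, links)
cost_after = string_cost(q_final, human_arm, links)
```

Because the cost compares points along the arm rather than joint angles, the same function works when the two chains have different numbers of segments, which is the property the paragraph above describes.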

The full pipeline ran on ROS, with robot models in URDF, kinematics handled by PyRoki, and JAX powering the optimization. It ran efficiently on a standard Intel CPU, and the architecture also promises better performance on GPUs.

Validated on Syncro 5

The system was tested on two robotic arms: an Interbotix RX200 for rapid prototyping, and Addverb's Syncro 5 (then called Heal) to validate performance in a more demanding, industrially relevant context. Both robots have fewer DOF than a human arm, yet the same algorithm worked effectively on both.

One real-world challenge the team encountered: local minima in the optimization. If a human raised their arm straight up, the robot sometimes mirrored the geometric shape correctly but pointed its arm downward. Mathematically valid; functionally wrong. The fix was an additional constraint: end-effector orientation matching. Not just the shape of motion, but the direction and intent.
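One way to picture the fix is as an extra term in the cost being minimised. The sketch below is an assumption about the general form, not the team's code: it models the orientation requirement as a soft penalty, `1 - cos(angle)`, between the direction the human hand points and the direction the robot's end effector points. The function names and the `weight` parameter are hypothetical.

```python
import math


def unit(v):
    """Normalise a vector; a zero vector has no direction, so reject it."""
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0.0:
        raise ValueError("direction vector must be non-zero")
    return tuple(c / norm for c in v)


def direction_penalty(human_dir, robot_dir):
    """0.0 when the two directions agree, 2.0 when they are opposite."""
    h, r = unit(human_dir), unit(robot_dir)
    return 1.0 - sum(a * b for a, b in zip(h, r))


def total_cost(shape_cost, human_dir, robot_dir, weight=1.0):
    """Shape-matching cost plus a weighted end-effector direction term."""
    return shape_cost + weight * direction_penalty(human_dir, robot_dir)


up = (0.0, 0.0, 1.0)
down = (0.0, 0.0, -1.0)

aligned = direction_penalty(up, up)      # human and robot both point up
flipped = direction_penalty(up, down)    # robot mirrors the shape but points down
```

With a term like this, a pose that matches the arm's shape but points the wrong way no longer looks like a good solution to the optimizer, because the flipped pose carries the largest possible direction penalty.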

This is what distinguishes research done on real hardware. The problem only becomes visible when the robot moves.

Why This Research Matters - Beyond the Demo

MonkeySee MonkeyDo is now open source. But what it demonstrates has implications well beyond a single project:

• Intuitive Human-Robot Collaboration: Operators can guide robots through natural arm movement, reducing training time and complexity

• Learning from Demonstration (LfD): Robots can learn tasks by observing human demonstration, instead of being manually programmed step by step

• Flexible Automation: The same retargeting algorithm adapts across different robotic platforms, tested and confirmed

• Foundation for AI-Driven Robotics: The architecture lays groundwork for integration with reinforcement learning and adaptive control systems

The team is now developing a C++ implementation using Pinocchio and ProxSuite for faster computation and greater precision, moving the system closer to real-world deployment standards. The project also welcomes issues and pull requests from anyone who wants to explore it.

Are You Building Something with Robots? Tell Us.

Shreya and Akshay's project is the first in what we hope becomes a growing library of student and researcher work built on Addverb platforms. We're actively looking for more projects to feature and more institutions to collaborate with.

If you're a student or researcher working on a robotics project, whether it's motion planning, perception, manipulation, human-robot interaction, or something else entirely, we'd like to know about it.

What we're looking for:

• Projects built on or validated with real robotic hardware

• Work that connects to open-source libraries, publishable research, or industry application

• Students and labs interested in access to Addverb's robots and the open-source tools on community.addverb.ai

To share your project or start a conversation about collaboration, write to us at [email protected].

The robots are ready. The floor is open.

Technical Appendix: Architecture & Implementation Details

This section is intended for readers interested in the technical depth of the project. The open-source repository will be linked here as it becomes available.

Pose Estimation Pipeline

• Stereo depth camera for 3D spatial capture

• MediaPipe for real-time skeletal pose estimation

• Output: 3D joint positions of the human arm, streamed via ROS

OCRA: Optimization-Based Retargeting

• Spline-matching approach to compare motion structure, not individual joints

• Virtual string connections between human arm segments and robot links

• Optimization adjusts robot posture to minimise distance from human pose

• Joint-agnostic — works with underactuated robots (5 DOF and 6 DOF validated)

• End-effector orientation constraint added to resolve directional ambiguity

Software Stack

• ROS (Robot Operating System) — communication backbone

• URDF — robot model definition

• PyRoki — forward/inverse kinematics

• JAX — optimization backend (CPU-deployable)

Validation Platforms

• Interbotix RX200 (5 DOF) — rapid prototyping and initial testing

• Addverb Syncro 5 / Heal (6 DOF) — industrial validation

Roadmap

• C++ re-implementation using Pinocchio kinematics library

• ProxSuite for faster, more precise optimization

• Expected improvement: smoother motion, lower latency, real-time deployment readiness

Link to GitHub Library for this Project

Syncro 5 is available to academic institutions through Addverb's robotics education programme. For access, open-source libraries, and collaboration enquiries, visit community.addverb.ai or write to [email protected].